Define the deviation from the mean to be . Let’s see what we expect that to be. By the linear function rule,

For every random variable, the expected deviation from the mean is 0. The positive deviations exactly cancel out the negative ones.

This cancellation prevents us from understanding how big the deviations are regardless of their sign. But that’s what we need to measure, if we want to measure the distance between the random variable and its expectation .

We have to get rid of the sign of the deviation somehow. One time-honored way of getting rid of the sign of a number is to take the absolute value. The other is to square the number. That’s the method we will use. As you will see, it results in a measure of spread that is crucial for understanding the sums and averages of large samples.

Measuring the rough size of the squared deviations has the advantage that it avoids cancellation between positive and negative errors. The disadvantage is that squared deviations have units that are difficult to understand. The measure of spread that we are about to define takes care of this problem.

🎥 Standard Deviation

12.1.1Standard Deviation¶

Let be a random variable with expectation . The standard deviation of , denoted or , is the root mean square (rms) of deviations from the mean:

has the same units as and . In this chapter we will make precise the sense in which the standard deviation measures the spread of the distribution of about the center .

The quantity inside the square root is called the variance of and has better computational properties than the SD. This turns out to be closely connected to the fact that by Pythagoras’ Theorem, squares of distances combine in useful ways.

Almost invariably, we will calculate standard deviations by first finding the variance and then taking the square root.

Let’s try out the definition of the SD on a random variable that has the distribution defined below.

x = make_array(3, 4, 5)

probs = make_array(0.2, 0.5, 0.3)

dist_X = Table().values(x).probability(probs)

dist_Xdist_X.ev()4.1Here are the squared deviations from the expectation .

sd_table = Table().with_columns(

'x', dist_X.column(0),

'(x - 4.1)**2', (dist_X.column(0)-4.1)**2,

'P(X = x)', dist_X.column(1)

)

sd_tableThe standard deviation of is the square root of the mean squared deviation. The calculation below shows that its numerical value is .

sd_X = np.sqrt(sum(sd_table.column(1)*sd_table.column(2)))

sd_X0.7The prob140 method sd applied to a distribution object returns the standard deviation, saving you the calculation above.

dist_X.sd()0.7Answer

(a) 1

(b) 2

We now know how to calculate the SD. But we don’t yet have a good understanding of what it does. Let’s start developing a few properties that it ought to have. Then we can check if it has them.

First, the SD of a constant should be 0. You should check that this is indeed what the definition implies.

12.1.2Shifting and Scaling¶

The SD is a measure of spread. It’s natural to want measures of spread to remain unchanged if we just shift a probability histogram to the left or right. Such a shift occurs when we add a constant to a random variable. The figure below shows the distribution of the same as above, along with the distribution of . It is clear that should have the same SD as .

dist2 = Table().values(x+5).probability(probs)

Plots('X', dist_X, 'X+5', dist2)

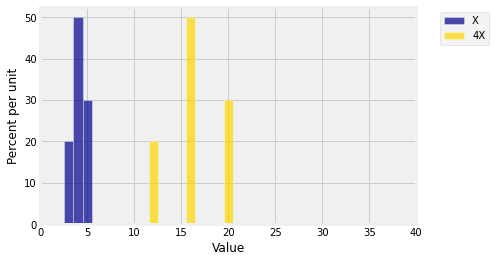

On the other hand, multiplying by a constant results in a distribution that should have a different spread. Here is the distribution of along with the distribution of . The spread of the distribution of appears to be four times as large as that of .

dist3 = Table().values(4*x).probability(probs)

Plots('X', dist_X, '4X', dist3 )

plt.xlim(0, 40);

Multiplying by -4 should have the same effect on the spread as multiplying by 4, as the figure below shows. One histogram is just the mirror image of the other about the vertical axis at 0. There is no change in spread.

dist4 = Table().values(-4*x).probability(probs)

Plots('-4X', dist4, '4X', dist3 )

🎥 SD: Linear Functions

12.1.3Linear Functions¶

The graphs above help us visualize what happens to the SD when the random variable is transformed linearly, for example when changing units of measurement. Let . Then

Notice that the shift has no effect on the variance. This is consistent with what we saw in the first visualization above.

Because the units of the variance are the square of the units of , is times the variance of . That is,

Notice that you get the same answer when the multiplicative constant is as when it is . That is what the two “mirror image” histograms had shown.

In particular, it is very handy to remember that .

Answer

,

12.1.4“Computational” Formula for Variance¶

An algebraic simplification of the formula for variance turns out to be very useful.

Thus the variance is the “mean of the square minus the square of the mean.”

Apart from giving us an alternative way of calculating variance, the formula tells us something about the relation between and . Since variance is non-negative, the formula shows that

with equality only when is a constant.

The formula is often called the “computational” formula for variance. But it can be be numerically inaccurate if the possible values of are large and numerous. For algebraic computation, however, it is very useful, as you will see in the calculations below.

Answer

2

🎥 Variance of Indicator

12.1.5Indicator¶

The values of an indicator random variable are 0 and 1. Each of those two numbers is equal to its square. So if is an indicator, then , and thus

You should check that this variance is largest when . Take the square root to get

12.1.6Uniform¶

Let be uniform on . Then

In the last-but-one step above, we used the formula for the sum of the first squares.

We know that , so

and

By shifting, this is the same as the SD of the uniform distribution on any consecutive integers.